Engineering

Hoshō: A Database Activity Monitoring Tool

Pratik Anurag, Manisha C, Shubham Sharma, and Krishna Prasanth15 May, 2026

When processing billions of transactions in a system as large as PhonePe, underlying databases go through constant querying, repairs and management. While a set of these tasks are automated, some are managed by human users. This leaves a potential risk of unauthorised/untracked human activities being executed in the databases. PhonePe addresses this risk with a Database Activity Monitoring (DAM) system called Hoshō (Guarantee).

Hoshō is designed to support database security and compliance efforts. It is a reactive or post-event monitoring tool, which means it does not sit inline with database traffic or intercept queries in real time. Instead, it analyzes audit logs after the database query, providing analysis of all database activities, delivering near real-time alerts based on pre-defined user actions, and establishing reporting.

Hoshō is purpose-built for activity monitoring and audit compliance. Adjacent concerns such as database access control (who is allowed to connect), session management (connection pooling, session lifecycle) and privilege provisioning are handled by other dedicated tooling within PhonePe’s infrastructure.

The blog post provides an in-depth look at Hoshō detailing its functionality, architecture, and the technical decisions.

What Hoshō does

Hoshō’s core function is to act as a post-event monitoring tool that identifies and alerts on any activity that breaches predefined rule sets after the event has occurred. The tool analyzes audit logs that stream into it real time or up to a configurable historical period, runs them against the pre-defined rule set and raises alerts for the instances where the rules are breached. The scope of its monitoring includes:

- Queries Executed: Tracking every command and transaction performed on the database, SELECTs, INSERTs, UPDATEs, DELETEs, and DDL operations.

- Sensitive Data Access: Monitoring access patterns to critical and regulated information, with the ability to flag specific query strings and database targets.

Alerting and Event Consolidation

The tool provides configurable alerts and notifications via Slack, email, and phone calls. To prevent alert fatigue Hoshō uses an event consolidation strategy:

- Fingerprint-based Deduplication: Each database event is fingerprinted using a SHA256 hash computed from a composite key of several fields. Identical events within a configurable aggregation window are marked as duplicate, marking the first log as an alert.

- Whitelist Filtering: Known service accounts and system users patterns are filtered at the ingestion layer, eliminating noise from routine operational queries before they reach the filter phase.

- Query Type Filtering: Connection lifecycle events (CONNECT/DISCONNECT) and other non-state-changing operations are dropped early in the pipeline, based on the configuration. Lifecycle events are also used to identify if downstream hosts are sending the data to the tool and alert in case of a miss.

When multiple events of the same fingerprint show up, the system notifies on the first instance and consolidates the rest into a summary digest.

Plug-and-Play, Database-Agnostic Design

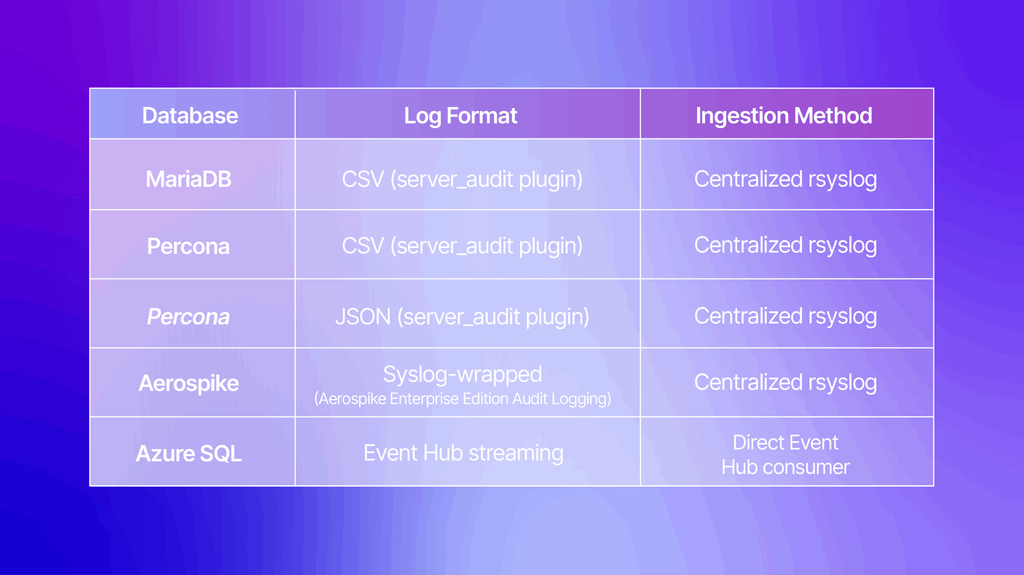

Hoshō is built as a highly flexible, plug-and-play model, currently supporting activity monitoring for:

Each database type has a dedicated Logstash pipeline with its own GROK patterns and parsing logic. Onboarding a new database technology requires defining a new pipeline configuration. The rest of the architecture (storage, alerting, visualization, approval workflows) works out of the box. Any user activity based system, such as HBase, ElasticSearch (when used for administration) can be plugged into Hoshō. Systems that have mutations based on events in general that they want to audit and approve, have integrated with the Hoshō stack.

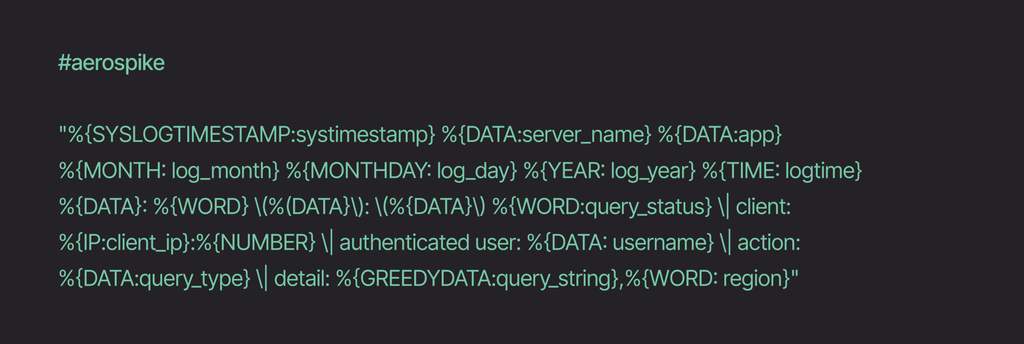

Though the premise for Hoshō to exist was to monitor database activity, the implementation was done in such a way that any user activity system/sub-system can be integrated with Hosho, point in case being the Aerospike integration. There are other such integrations existing in PhonePe that use Hosho that are entirely different technologies, but generate user specific activity that requires auditability.

Log-Based, Agentless Architecture

Since Hoshō operates on a log-based, post-event architecture, it works on a copy of the event/audit logs and does not require deployment on individual host machines, eliminating the performance overhead and operational complexity of host-based agents.

We preferred an agent-less architecture to have zero impact on database latency or availability. The database operations complete independently, and Hoshō processes the resulting audit trail asynchronously. Each component of this distributed system, ingestion, processing, storage, and visualization can be independently scaled to match throughput requirements.

Architecture: Three Pillars of Operation

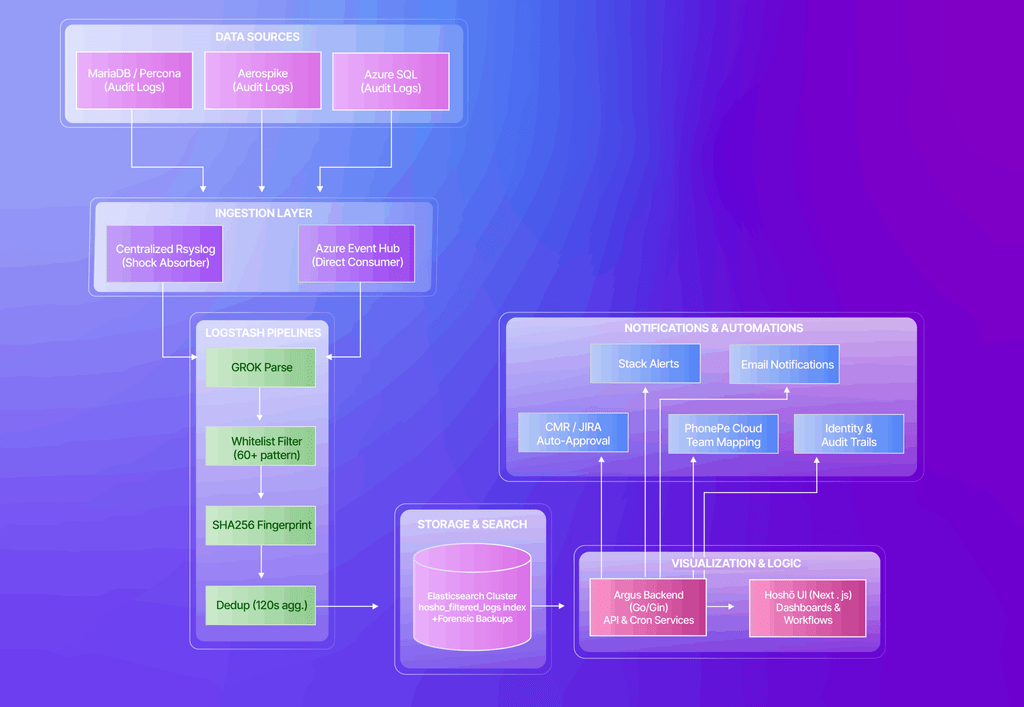

The Hoshō architecture is divided into three independently scalable and specialized layers: Ingestion, Processing & Visualization, and Post Processing.

1. Ingestion:

The first stage is responsible for collection and buffering of audit logs. This is also known as “The Funnel”.

For MySQL (MariaDB, Percona) and Aerospike, audit data from hundreds of database instances across multiple regions and VRFs are aggregated into respective centralized rsyslog servers. This server also acts as a shock absorber, if the downstream Logstash processing is paused for maintenance or experiences high load, the centralized rsyslog instance holds the buffered data, ensuring no audit trail is lost. All the logs from the centralized rsyslog server are streamed to another instance of rsyslog to be persisted for the next step. The ingestion layer also supports a feature to block specific hosts from forwarding their logs, useful for decommissioned or test hosts.

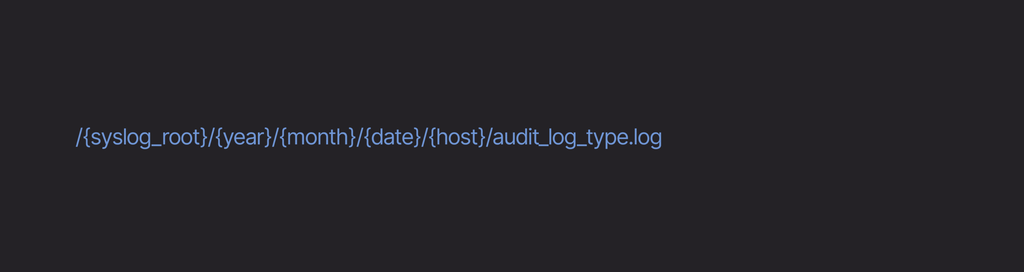

The rsyslog path structure follows a hierarchical convention:

For Azure-based databases, the system leverages the Azure Event Hub as a direct consumer. Instead of reading from a persisted folder structure, Logstash initiates an outbound connection to Azure to read the data stream from an Event Hub. It ignores other Event Hubs in the same namespace unless configured otherwise.

2. Processing and Visualization:

The Processing Pipeline

At the processing stage, logs are consumed by a set of Logstash pipelines, each for a specific database type. The pipeline performs the following operations:

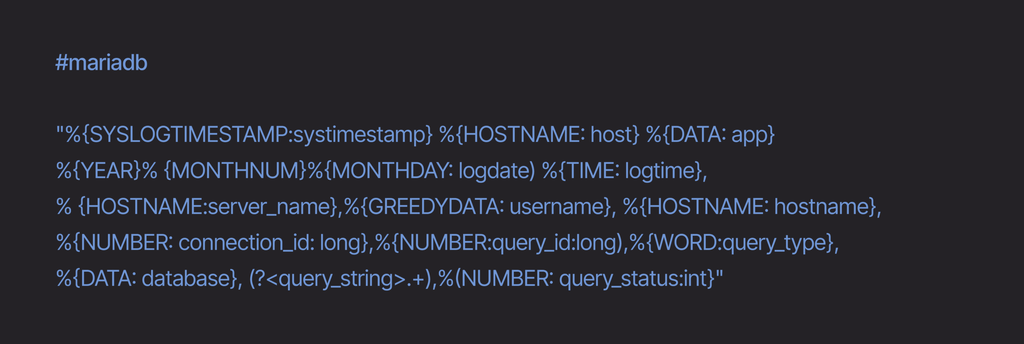

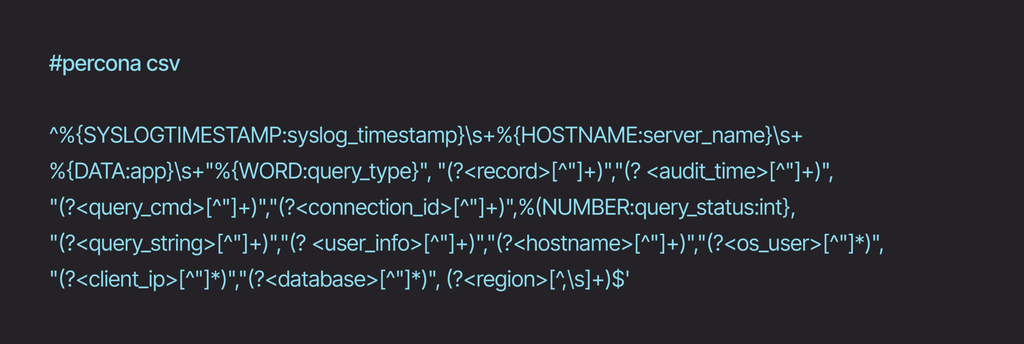

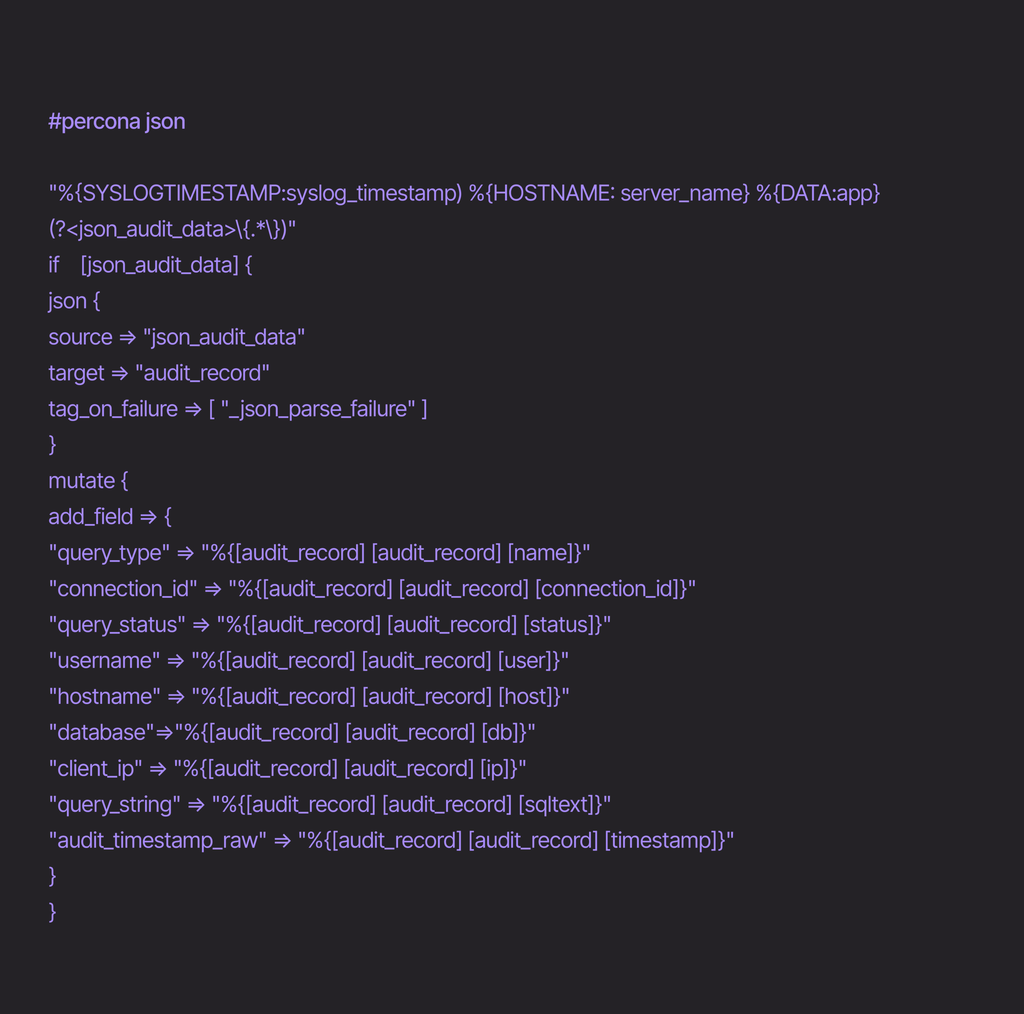

1. Parsing with GROK: Incoming log lines are parsed using a GROK pattern that extracts structured fields. Below are sample grok patterns used for various databases:

This transforms a raw syslog line into a structured document with fields like server_name, username, query_type, database, query_string, query_status.

2. Filtering and whitelisting: The pipeline drops events from known system and service accounts. Service users and known patterns are whitelisted through a carefully vetted and regularly reviewed process, including patterns like accounts ending in _ro, _rw, or _ssl. Connection lifecycle events (CONNECT / DISCONNECT) and non state changing queries are also dropped.

3. Fingerprinting for Deduplication: Each event is fingerprinted using a SHA256 hash of the composite key of several fields. The Logstash aggregation filter then applies a configurable deduplication window: the first event with a given fingerprint generates an alert, while identical events within the next configurable amount of seconds are marked as duplicates and are not raised as alerts.

4. Metadata Enrichment: Region, VRF and other metadata fields are added into each document, enabling filtering and team-based operations.

5. Output Routing: Processed events(alerts and duplicates) are routed to Elasticsearch indexes for storage, alerting and querying. Unique alert events are also written to a local alert log.

The Visualization Layer

The user-facing interface is served via a containerized architecture comprising two services:

Hoshō Backend, a Go-Gin based web server. The backend provides:

- REST API – a set of endpoints for document search, approval workflows, aggregations, graph data, user activity tracking, and rule visualisation. This includes querying elasticsearch with wildcard matching, range filters, and term aggregations for all search and dashboard operations.

- Async Scheduler – runs minute-level real-time checks and daily digest reports with timely alerts and provides notifications via slack alerts/mail/phone.

Hoshō UI, a frontend built with TypeScript, and Tailwind CSS. The UI provides:

- Alerts and Dashboards – Alerts with full query details, metadata, and approval history. With drill down charts and dashboards based on date and field filters.

- Export Option – Option to download CSV exports of alerts based on filters.

- Approval Workflow – Option to Acknowledge and Approve, with comment.

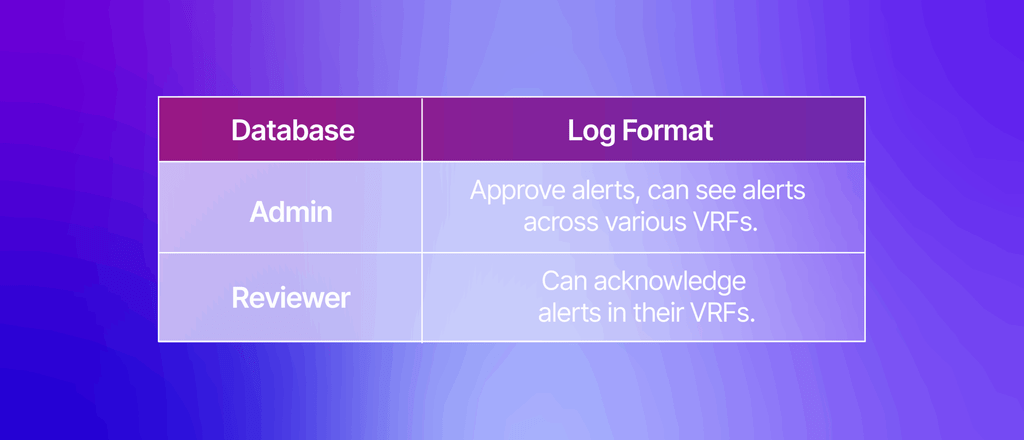

Role-Based Access Control

Hoshō implements a robust RBAC model that governs both API access and UI behavior:

Authentication flows through two paths:

- mTLS + Lua JWT (Non RBAC-enabled environments): OpenResty extracts the Common Name (CN) and Organizational Unit (OU) from client certificates, generates a JWT with email, roles, and region/VRF access claims, and sets it as a cookie. This approach enables certificate-pinned zero-trust access.

- OAuth (RBAC-enabled environments): OAuth2 flow with HMAC-SHA256 state validation, Groups integration for role mapping, and JWT token generation with 24-hour expiry.

The JWT access claim contains a region to VRF mapping, which the UI respects by populating the Region and VRF pickers exclusively with authorized values.

3. Post Processing:

The Post Processing step maps alerts into actionable, team-attributed audit trails.

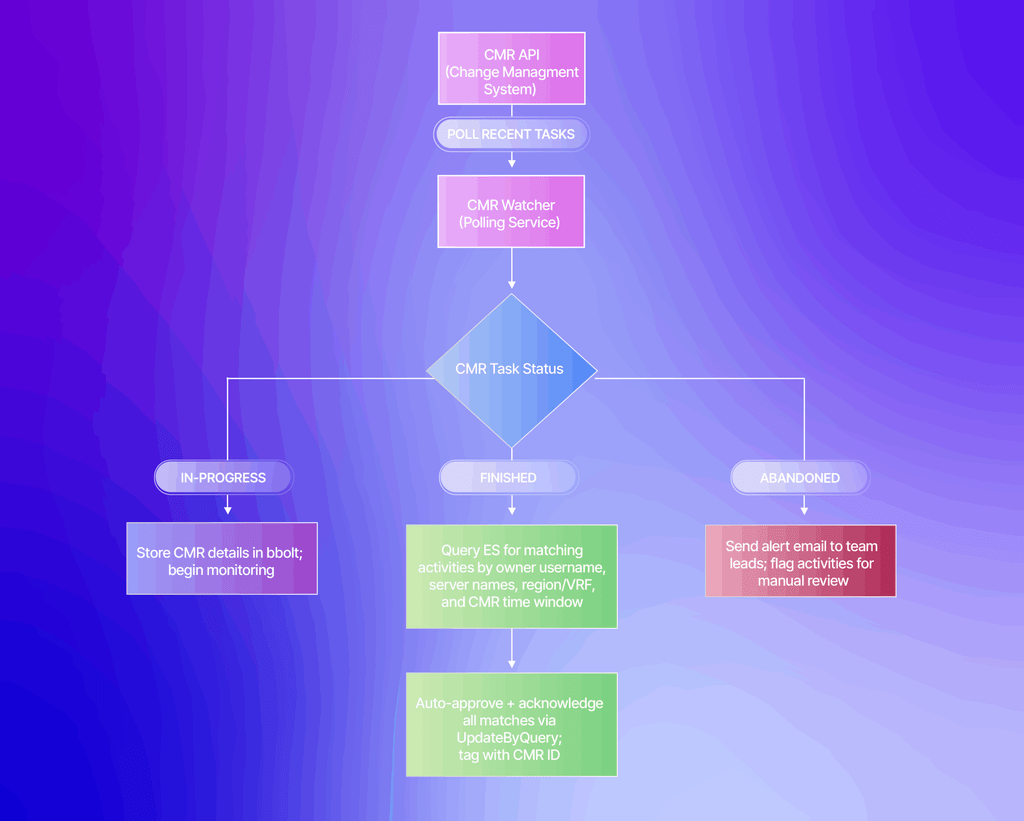

Change Management Request (CMR) Integration

The Change Management Request (CMR) process is key to tracking and approving production changes. In the context of Hoshō – the CMR details, the approved hostnames, the users executing the query, and the time window, provide Hoshō with the critical insight needed to automatically whitelist legitimate activities. This ensures that only database actions lacking a pre-approved change trail are flagged for manual review.

The CMR Watcher is an async service that periodically queries the PhonePe Change Management System API for recent tasks and subsequent changes and puts them in an embedded key-value store.

The reconciliation flow works as follows:

When a CMR completes successfully, the tool converts CMR owner emails to temporary database identity of the user, matches them against activities in Elasticsearch using the CMR’s time window and server scope (supporting both exact server names and regex patterns from the CMR’s hostlist), and performs an auto approval and acknowledgment.

Any database activity that does not fall within a valid CMR window i.e., lacks a pre-approved change trail is left prominently in the UI portal for mandatory manual review and acknowledgment.

Team Auto-Attribution via PhonePe Cloud

The backend integrates with PhonePe Cloud (PPEC) to automatically resolve team ownership for each VRF/region combination. Team metadata including group email, operations email, head of engineering, points of contact, managers, and Slack webhook URLs is loaded from an identity configuration. This enables:

- Automatic routing of daily digest emails to the correct team leads.

- Alert attribution to the responsible team without manual tagging.

- Slack notifications directed to team-specific channels.

Notification Strategy

Hoshō supports two notification cadences:

- Near Real-Time Alerts: A cron process checks (configurable minute, default: 1 minute) for new unapproved activities, and triggers alerts(email, slack and phone call) with the details of suspicious queries detected in the last reporting window.

- Daily Digest Reports: A daily cron (configurable hour, default: midnight) generates per-region summaries of all activities from the past 24 hours, grouped by team, and dispatches them to team leads.

Email payloads are structured with team-specific recipient lists, and content that includes query details, region context, and timeframe and associated CMRs.

Design Decisions Worth Noting

Several architectural choices in Hoshō are worth calling out for the engineering trade-offs they represent:

- SHA256 Fingerprinting over MD5: Chosen for its stronger collision resistance in the deduplication context, accepting a marginal performance cost for increased reliability.

- Configurable Deduplication Window: A carefully tuned balance (120 seconds), short enough to maintain near real-time alerting latency, long enough to suppress the burst patterns typical of batch database operations.

- bbolt for CMR State: Instead of external dependency for tracking in-flight CMRs, Hoshō uses bbolt — an embedded B+ tree key-value store. This keeps the operational footprint small for what is a fundamentally transient state.

- OpenResty + Lua for Auth at the Edge: By performing certificate validation and JWT generation at the reverse proxy layer, the backend remains auth-agnostic and can be tested independently. The Lua scripts handle CN/OU extraction, JWT signing, and cookie management — all before a request reaches the Go application.

- URL-Driven State in the UI: All filter states (region, VRF, date range, username, approval status, pagination) live in URL query parameters rather than local state or localStorage. This enables deep linking to specific views, browser back/forward navigation through filter states, and shareable URLs for incident collaboration.

- Decoupled Processing and Visualization Pipelines: The Logstash processing pipeline and the UI/API visualization layer operate independently. Either can be updated, scaled, or restarted without affecting the other — a critical property in a system that must maintain continuous audit coverage.

- Elasticsearch UpdateByQuery for Bulk Approvals: Instead of fetching documents, modifying them client-side, and writing them back, Hoshō uses Painless scripts in Elastic operations. This enables atomic bulk approvals of hundreds of alerts matching a CMR’s criteria in a single API call.

Security Aspect

As a security and compliance tool, Hoshō’s own security posture is carefully considered:

- Mutual TLS at the reverse proxy ensures only certificate-bearing clients can access the API.

- JWT tokens with 24-hour expiry and region/VRF access claims enforce fine-grained authorization.

- Role-based permission checks on every API endpoint prevent privilege escalation.

- CMR time-window scoping ensures auto-approval only applies to activities within the approved change window.

- Immutable audit trails, every approval and acknowledgment is recorded with timestamp, actor identity, and comment.

- No host-agent deployment means Hoshō cannot be compromised via a database host breach; it operates on log copies shipped through a separate data plane.

Conclusion

In a highly audited and compliant environment such as PhonePe’s, tools like Hoshō help with the visibility without the overhead of a host based agent model. While database access control and session management are handled by other dedicated systems in PhonePe’s security stack, Hoshō ensures that every action taken after access is granted, is monitored, attributed, and auditable.

The tool’s Funnel-based ingestion ensures reliable, buffered log collection across database technologies and cloud providers. The Logstash processing stage processes logs along with whitelisting, fingerprinting, and deduplication, ensuring that only actionable alerts surface. The CMR auto-approval integration closes the loop between change management and monitoring. The visualization layer gives teams interactive, filterable, role-scoped dashboards with export capabilities.

Hoshō stands as a scalable, plug-and-play, vendor-agnostic solution capable of being deployed in both centralized and distributed architectures to fit the security and compliance needs of any environment. It demonstrates that effective database activity monitoring doesn’t require invasive agents or vendor lock-in, just thoughtful architecture and a commitment to keeping audit trails complete, attributable, and actionable.