
Beyond IaC: Engineering a Solution to Guarantee What Actually Is in Production

Aniruddha Thombre, Udeshya Giri, Shivam Pansy Satyendra Tyagi, and Ghanshyam Gupta | 17 April, 2026

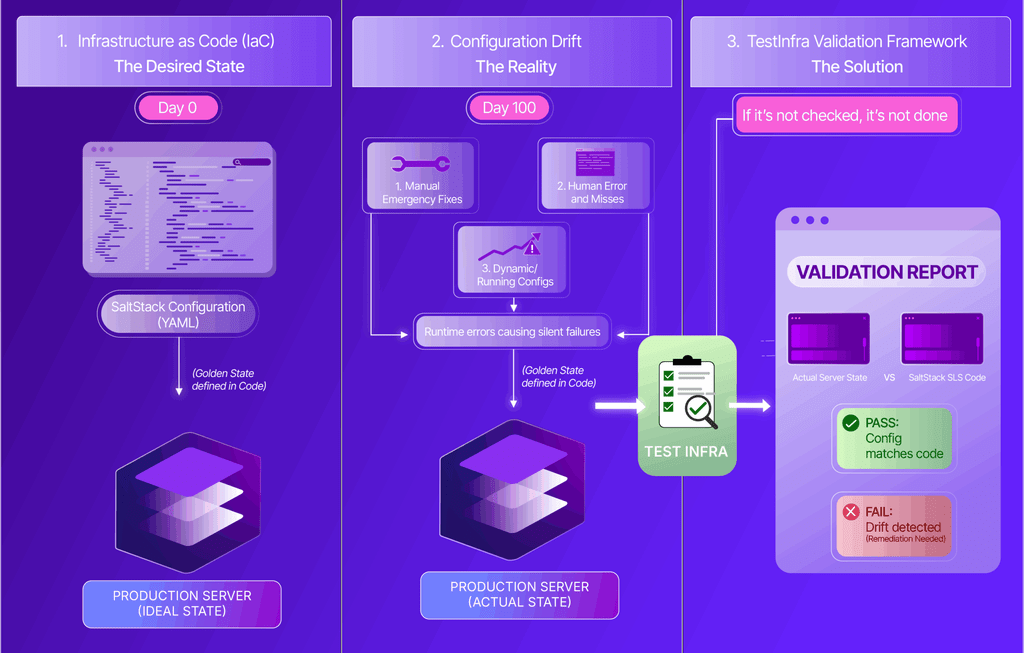

Infrastructure as Code (IaC) is often treated as the holy grail of Site Reliability Engineering (SRE). We use tools like SaltStack and Ansible, alongside golden images and post-install scripts, to define our ideal state. But here is the hidden secret of infrastructure engineering: IaC dictates how things should be, but it doesn’t guarantee what actually is.

Configuration states inevitably drift over time. Manual emergency fixes are applied during firefights, human errors and omissions accumulate, and runtime errors cause states to fail silently. This is especially true for dynamic or runtime configuration that does not come from config files. System state is not always derived solely from a highstate run or, in some edge cases, from complete end-to-end automation. Moreover, consistently applying highstate to every node is impractical at scale, due to both the number of nodes and the complexity of the states involved.

And one shouldn’t use the System Under Test to test itself! Who gives grading privileges to the student who did the homework?

We realized that relying solely on IaC wasn’t enough; we needed to validate that the production systems actually reflected the code. It all boils down to one simple, uncompromising truth: if it’s not checked, it’s not done.

This is the story of how we retired our scattered scripts, overcame severe infrastructure bottlenecks, and built TestInfra—a robust, multi-environment validation framework that now executes over a million checks per environment.

The Dark Ages: The Operational Nightmare of Personal Favourite Scripts

Before TestInfra, our validation strategy was a fragmented mess of individual “favourite” scripts. But the problem went far beyond mere fragmentation—it was a massive operational nightmare.

- The Discoverability Problem: Scripts were scattered across Git repos or hidden as snippets in Salt configs. If you didn’t know a script existed, you couldn’t use it.

- The Duplication Problem: Teams were duplicating efforts, creating unstandardized checks with no reusable logic.

- The Hindsight Problem: There was no historical record of check results, so we had no hindsight into when or how a failure first appeared.

- The Deployment Dilemma: If you want to validate thousands of servers, you absolutely do not want to ship test code and its dependencies to every single host and maintain them there. If you keep code and dependencies on all servers, the test/check versions themselves will inevitably start drifting, defeating the entire purpose of a unified validation architecture!

We needed a framework that was centralized, reusable, and didn’t involve reinventing the wheel.

The Crossroads: Choosing the Right Toolstack

Before we could pick a tool, we had to address how we would actually communicate with thousands of servers securely.

The Agent Restrictions: We had three main ways into our servers: SSH, Salt, and Wazuh. Each came with its own strict controls and operational issues. For example, we couldn’t simply use headless SSH users because of strict regulatory, compliance, and auditability restrictions. The same goes for the Wazuh agent, which is strictly under InfoSec custody. And, for the reasons given above, we didn’t want to casually add more agents or other new software to our servers, since that would only increase our attack surface and maintenance burden.

With these strict operational and security constraints in mind, we evaluated several existing tools in the ecosystem to build this independent, centralized verification:

- Building our own framework: We briefly considered this, but the massive effort, reinventing the wheel, and unforeseen edge cases made it a big “NO”.

- InSpec/ServerSpec: These rely heavily on Ruby and RSpec. Since high-level APIs were missing or difficult to implement, extending them posed a significant challenge.

- Goss: Being YAML-driven, it is great for a small set of checks but cannot be easily extended and requires its own binary.

- Molecule: Difficult for complex logic, though advantageous in Ansible-heavy environments.

The Winner: Pytest-Testinfra. We chose Pytest-Testinfra because it fits seamlessly into our Python ecosystem. Crucially, it solved our operational and agent hurdles: it acts as a centralized runner that supports SaltStack, Ansible, SSH, and local execution backends out of the box, without requiring new agents. Each of our varied environments can use whichever transport (SaltStack, SSH, Ansible, or local) suits its needs. Because the test logic stays central, there is zero test-code drift on the edge nodes. It also lets us write checks in just 3-5 lines of code and cleanly separate test logic from test data.

Solving the Reporting Puzzle: Running tests centrally is useless without great visibility. We evaluated several alternatives, including Allure Reports and our internal tools Magnus [software focus], Testopia, and others, but ultimately integrated ReportPortal. ReportPortal won because it handles massive scale, meets strict security requirements (authentication and authorization), and provides machine-learning-driven auto-analysis.

This integration became so reliable that we used it to confirm the sanity of our Disaster Recovery (DR) sites both before and after major migrations. The beauty of this setup is that we never have to trigger a run to answer a question like “Is my DR site ready right now?”: the latest results already answer it at any point in time. The value of these architectural decisions became evident when we had to carry out a real DR exercise in anticipation of a large-scale disruption at one of our production sites. The environments saw no glitches during this impromptu exercise; because the checks had been running all along, we got through it without any major preparation.

Scaling Up: Hitting the Million Milestone

Once the foundation was laid, adoption skyrocketed. The framework’s numbers are now wild:

- We operate in 25+ diverse environments with 20+ active contributors.

- We have written more than 15K lines of code, a robust foundation almost equal in size to the upstream Testinfra repository itself!

- Through aggressive data-driven parameterization, our 390+ logical test cases expand dynamically into tens of thousands of test executions.

- In just one of our smaller environments, this translates to about a million unique test runs within a few months. Is it overkill? Maybe. Maybe not, if you want sanity all the time.
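The multiplication behind those numbers is simple: every logical test runs once per parameter value per target host. The plain-Python sketch below uses a hypothetical inventory of 40 hosts and made-up package and service lists to reproduce that arithmetic, generating pytest-style IDs (format simplified).

```python
from itertools import product

# Hypothetical inventory and test data, for illustration only.
hosts = [f"app-{i:02d}" for i in range(1, 41)]          # 40 targets
packages = ["chrony", "rsyslog", "auditd", "openssh-server"]
services = ["chrony", "rsyslog", "auditd"]

# Each logical test is one function; parameterization multiplies it.
logical_tests = {
    "test_package_installed": packages,
    "test_service_running": services,
}


def expand(tests, targets):
    """Yield one ID per (test, host, parameter) combination."""
    for (name, params), host in product(tests.items(), targets):
        for param in params:
            yield f"{name}[{host}-{param}]"


ids = list(expand(logical_tests, hosts))
print(len(logical_tests), "logical tests ->", len(ids), "executions")
# prints: 2 logical tests -> 280 executions
```

Scale the same product up to hundreds of logical tests, longer parameter lists, and more hosts, and a single run quickly reaches tens of thousands of executions.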

We heavily emphasized the DRY (Don’t Repeat Yourself) principle, building custom utility modules (such as RabbitMQ, MysqlClient, Daemontools, AerospikeClient, and a few more specific to PhonePe’s customizations) to abstract complex logic away from the raw test files.

The Boss Fight: The SaltStack Event Bus Bottleneck

As we scaled, we hit an architectural wall. Our validation checks relied on SaltStack’s primary event bus to push execution tasks, and that bus became fatally congested. In larger environments, minions would simply stop responding, leading to widespread execution timeouts.

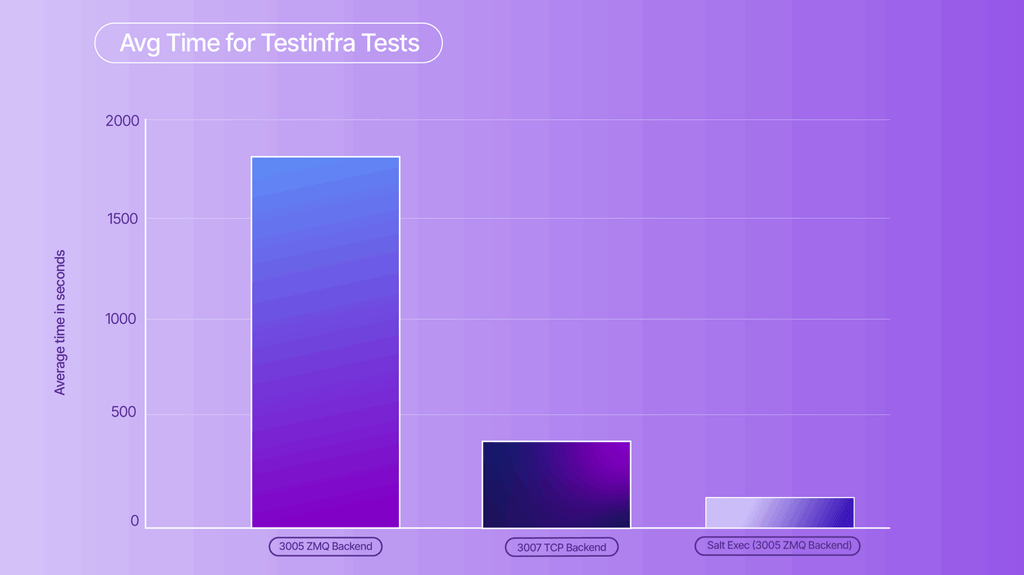

Attempt 1: The TCP Transport Experiment. Salt provides two transport mechanisms: ZMQ (the default) and TCP. We tested moving from ZMQ on Salt 3005 to TCP on Salt 3007. The results were initially mind-blowing: an average 70% reduction in execution time and almost zero timeouts.

However, it was a trap. Salt 3007 ships as a “onedir” installation that bundles Python 3.10, whereas our Focal environments use Python 3.8. This caused severe dependency mismatches (specifically with bernhard and protobuf) that broke our existing automation scripts. Furthermore, we were unable to revert to ZMQ once TCP was enabled, and 5% of Salt calls started failing with “Nonce verification errors”. Along the way, we also built internal tooling for SaltStack profiling and auditing (SaltLens); although it is outside the scope of this post, we may soon write a separate blog about that ecosystem.

Attempt 2: External Job Cache. We tried offloading work by configuring the Salt master with an external job cache backed by PostgreSQL / MariaDB. This had no effect whatsoever on performance: execution times did not improve, and timeouts did not decrease.

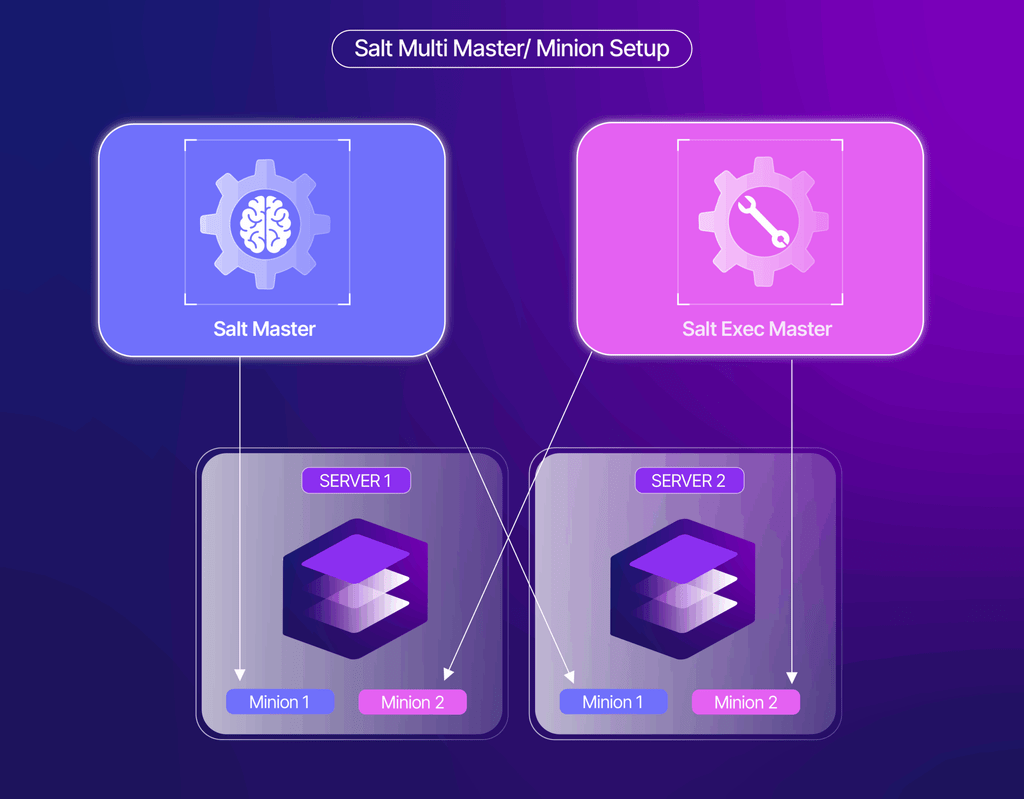

The Final Solution: The “Salt Exec” Multi-Master Workaround. We needed a solid backbone to push commands without meddling with the existing fragile setup and without forcing a massive production cut-over. Our breakthrough was implementing a multi-master, multi-minion setup dedicated to execution. We spun up a dedicated salt-exec node with specs mirroring the primary master.

- This secondary master holds no states or pillars.

- It does not impact the existing master-minion relationships.

- It provides a separate execution event bus and a dedicated entry point for our automation frameworks.

By isolating the execution traffic and bypassing the congested primary bus entirely, we cut the average run time of our Testinfra checks from nearly 1800 seconds to around 115 seconds.
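For a sense of scale, here is the arithmetic on the two averages quoted above (roughly 1800 s before and 115 s after):

```python
# Average Testinfra run times before and after moving execution to salt-exec.
before_s, after_s = 1800, 115

speedup = before_s / after_s        # how many times faster
reduction = 1 - after_s / before_s  # fraction of wall-clock time saved
print(f"{speedup:.1f}x faster, {reduction:.1%} less wall-clock time")
# prints: 15.7x faster, 93.6% less wall-clock time
```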

The Bigger Picture: Inspiring a Cultural Shift

The success of TestInfra proved something far more valuable than just execution speeds: it proved the immense power of our collective wisdom.

Previously, our restructuring into specialized pods brought deep focus but naturally created knowledge silos. Finding the right internal expert or a pre-existing tool had become difficult. TestInfra broke those barriers by bringing together experts from various pods to collaborate brilliantly and create something universally valuable.

This cross-pollination was so successful that it became the catalyst for our next major organizational evolution: SRE Forge.

Enter SRE Forge: Our Internal Open-Source Framework

Inspired by the project lifecycle and collaborative guidelines of the Apache Software Foundation (ASF), SRE Forge acts as our “Great Librarian.” It is a centralized hub designed to systematically harness the talent scattered across our teams, moving us from solo acts to working like a unified beehive.

SRE Forge operates through a structured JIRA project with three main pillars:

- The Idea Forge: A creative hub to share raw ideas, get feedback, and find collaborators before writing a single line of code.

- Incubation Projects: A structured path where ideas grow into real, robust projects, passing through strict quality gates overseen by Architects to prevent unmaintainable tools from hitting production.

- The Swiss Army Knife: A shared toolkit for discovering and utilizing practical, everyday utility scripts.

The Road Ahead! चरैवेति चरैवेति ॥

आस्ते भग आसीनस्योर्ध्वस्तिष्ठति तिष्ठतः । शेते निपद्यमानस्य चराति चरतो भगः ॥ चरैवेति चरैवेति ॥

The fortune of one who sits also sits; it rises when he rises, sleeps when he sleeps, and moves only when he moves.

Therefore, keep moving! Keep moving!

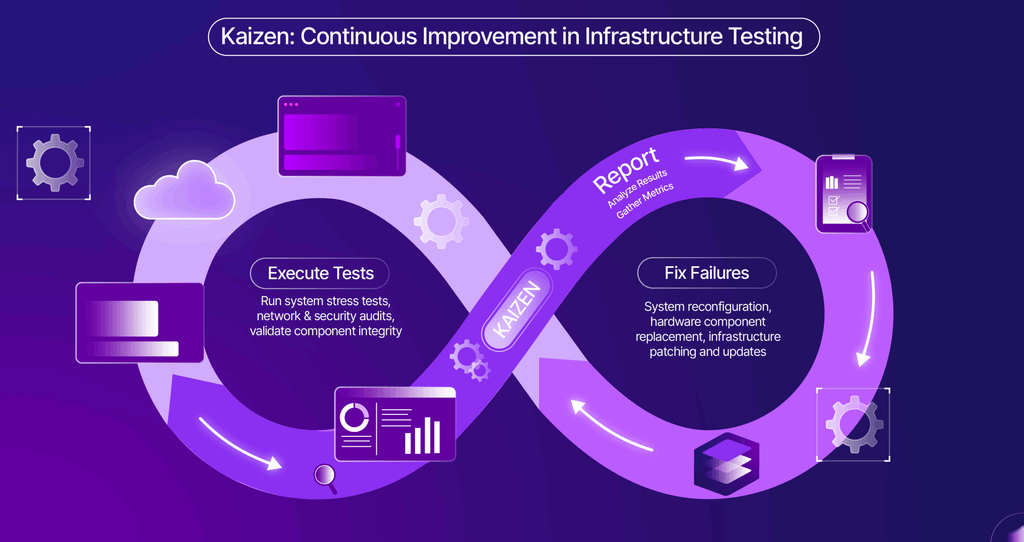

With atomic checks in place, we were able to build a large repository of atomic SOPs, one for each type of failure. Built with the exact same collaborative approach, this repository now serves as a stepping stone toward automating fixes for specific categories of failures.
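One way to structure such a repository, sketched here under our own assumptions (the `SOP` fields, check names, and steps are all hypothetical), is to key each SOP by the check function it belongs to. Any failing pytest node ID then maps straight to its runbook, and a flag marks which failure categories look safe to automate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SOP:
    """One atomic runbook, owned by exactly one check (hypothetical schema)."""
    check: str          # name of the test function this SOP belongs to
    title: str
    steps: tuple        # ordered manual steps
    auto_fixable: bool  # candidate for automated remediation


SOPS = {
    sop.check: sop
    for sop in (
        SOP("test_service_running", "Start a stopped service",
            ("Read the service logs", "Start and enable the service", "Re-run the check"),
            True),
        SOP("test_queue_backlog", "Drain a message-queue backlog",
            ("Identify slow consumers", "Scale out consumers", "Re-run the check"),
            False),
    )
}


def sop_for(nodeid):
    """Map a pytest node ID such as 'checks/test_x.py::test_y[param]' to its SOP."""
    func = nodeid.split("::")[-1].split("[", 1)[0]
    return SOPS.get(func)


def auto_fix_candidates(failed_nodeids):
    """Return the failed checks whose SOP is marked as safe to automate."""
    candidates = []
    for nodeid in failed_nodeids:
        sop = sop_for(nodeid)
        if sop is not None and sop.auto_fixable:
            candidates.append(nodeid)
    return candidates
```

Keeping SOPs as atomic as the checks means automation can be introduced one failure category at a time.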

Closing Thoughts:

By hunting down configuration drift with TestInfra, we didn’t just validate our infrastructure; we validated the culture of open collaboration. The transition shifted our mental model from reactive firefighters to proactive gardeners who cultivate and sustain a resilient infrastructure. TestInfra showed us what happens when we stop reinventing the wheel and start building together. Now, any project that spans multiple pods and is destined for production must pass through the SRE Forge lifecycle and benefit from collective wisdom.

Resources & Tooling Mentioned

If you are looking to build or enhance your own infrastructure validation and automation pipelines, here are the official links to the open-source tools and frameworks we evaluated and used during our journey:

- Testinfra (Pytest-Testinfra): https://testinfra.readthedocs.io/

- Pytest: https://docs.pytest.org/

- ReportPortal: https://reportportal.io/

- SaltStack: https://saltproject.io/

- Ansible: https://www.ansible.com/

- Molecule (Ansible testing): https://molecule.readthedocs.io/

- ServerSpec: https://serverspec.org/

- InSpec (by Chef): https://www.inspec.io/

- Goss: https://goss.rocks/ (or https://github.com/goss-org/goss)

- Allure Reports: https://allurereport.org/

- Sphinx (Documentation): https://www.sphinx-doc.org/

Pro Tips:

- No framework or tool will match your exact requirements; pick ones you can modify or extend. Keep licensing at the forefront of your decisions.

- Categorize your checks by System Under Test and by type of check (dynamic/runtime state vs static configs).

- Divide and rule: separate your tests, test data (parameters), logic (modules), and target (System Under Test) to keep your code clean.

- Use in-code documentation with Sphinx (or another documentation generator) to publish a live documentation website that minimizes the learning curve for new contributors.

- Separate projects and automated issue tagging in ReportPortal are useful for visualization as well as for scheduling fixes.